Designing Human-in-the-Loop AI Systems for Anti-Financial Crime

Applying AI to regulatory intelligence, risk analysis, and compliance workflows, without compromising accuracy, auditability, or control

Overview

Financial institutions are under increasing pressure to adopt AI to improve efficiency in compliance operations.

In Anti-Financial Crime (AFC) environments—spanning AML, sanctions, and regulatory oversight—automation introduces operational and regulatory risk when applied without structure.

This work explores a different approach:

designing AI systems that operate within governed workflows, where human validation is a required component of system function.

The goal is not full automation but collaborative human-AI systems that:

Improve analytical efficiency;

Maintain regulatory accuracy;

Preserve institutional knowledge;

Ensure outputs remain traceable and reviewable over time.

The system supports AFC teams by:

Validating regulatory requirements against live sources.

Aligning internal policies and procedures.

Identifying gaps and emerging risks.

Supporting structured analysis and drafting.

Generating training and knowledge artifacts.

My Role

This work focused on translating AI capabilities into systems that are:

Reliable under constraint

Governable at scale

Auditable in practice

I led the design of this system as part of consultancy work focused on applying human-centered design to digital transformation in regulated environments.

My responsibilities included:

Defining system architecture and end-to-end workflows.

Identifying failure modes and regulatory risks.

Designing HITL governance structures.

Developing testing and diagnostic frameworks.

Modeling system behavior over time.

Context

AI adoption in AFC workflows is typically framed around automation and cost reduction.

The issue is not whether AI should be used.

It is how it is structured, governed, and validated over time.

The automation framing assumes repetitive work can be safely removed without impacting system integrity or the development of future subject matter expertise.

In regulated environments, this assumption breaks down.

These tasks are not expendable—they are the training ground through which analysts build judgment, context, and regulatory fluency.

Removing them introduces long-term knowledge risk alongside immediate operational risk.

Automation also introduces additional failure modes:

Degraded analysis over time.

Incorrect or unverifiable citations.

Missed regulatory changes.

Loss of contextual judgment.

False confidence in outputs.

In AFC, failure is not symmetrical. Missing risk is significantly more costly than inefficiency.

Service Strategy

Human-in-the-loop is not a safeguard; it is a structural requirement.

AI augments human expertise; it does not replace it. All system outputs are advisory. Human validation is required for use.

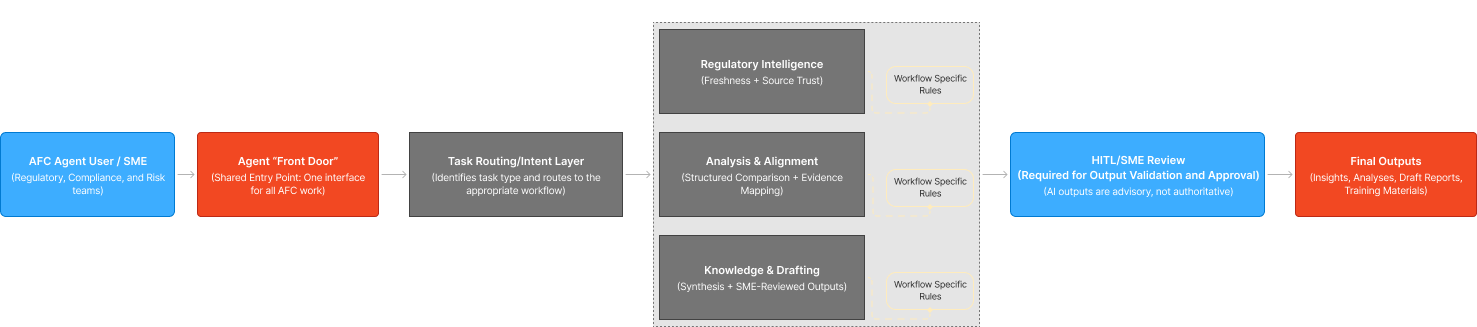

One Front Door, Differentiated Workflows

The system operates through a single entry point with differentiated task pathways.

Each workflow is designed with:

Workflow-specific validation logic.

Explicit constraints.

Defined review expectations.

A single interface with differentiated workflows reduces task drift and keeps system behavior consistent over time.
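The single entry point with differentiated pathways can be pictured as a small routing table. The following is a minimal sketch, not the system's actual implementation: the workflow names, the validation rules, the constraint strings, and the review levels are all hypothetical and chosen only to show each workflow carrying its own validation logic, constraints, and review expectation.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Workflow:
    name: str
    validate: Callable[[str], list]  # returns a list of problems (empty if none)
    constraints: tuple               # explicit limits on what the workflow may do
    review_level: str                # who must review the output


# Hypothetical validation rules, for illustration only.
def validate_regulatory_change(request: str) -> list:
    return [] if "source:" in request.lower() else ["missing source reference"]


def validate_policy_gap(request: str) -> list:
    return [] if "policy:" in request.lower() else ["missing policy reference"]


# Hypothetical workflow registry behind the single entry point.
WORKFLOWS = {
    "regulatory_change": Workflow(
        name="regulatory_change",
        validate=validate_regulatory_change,
        constraints=("cite live sources only", "no compliance reporting"),
        review_level="senior_analyst",
    ),
    "policy_gap": Workflow(
        name="policy_gap",
        validate=validate_policy_gap,
        constraints=("compare against current policy version",),
        review_level="policy_owner",
    ),
}


def front_door(task_type: str, request: str) -> dict:
    """Route a request to its workflow and apply that workflow's own checks."""
    workflow = WORKFLOWS.get(task_type)
    if workflow is None:
        # Unknown task types are refused rather than guessed at.
        raise ValueError(f"Unknown task type: {task_type!r}")
    problems = workflow.validate(request)
    return {
        "workflow": workflow.name,
        "accepted": not problems,
        "problems": problems,
        "constraints": workflow.constraints,
        "review_level": workflow.review_level,
    }
```

Refusing unknown task types, instead of falling back to a generic pathway, is one way to keep tasks from drifting outside the workflows that have defined validation and review.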

Core Use Case

Regulatory Intelligence and Risk Analysis Support.

The system supports AFC teams in monitoring regulatory changes, assessing policy alignment, and identifying emerging risks.

It enables structured, efficient analysis while maintaining full human accountability.

The system does not automate compliance reporting.

It accelerates analysis and improves visibility without removing human accountability.

System Snapshot

A high-level view of how the system operates.

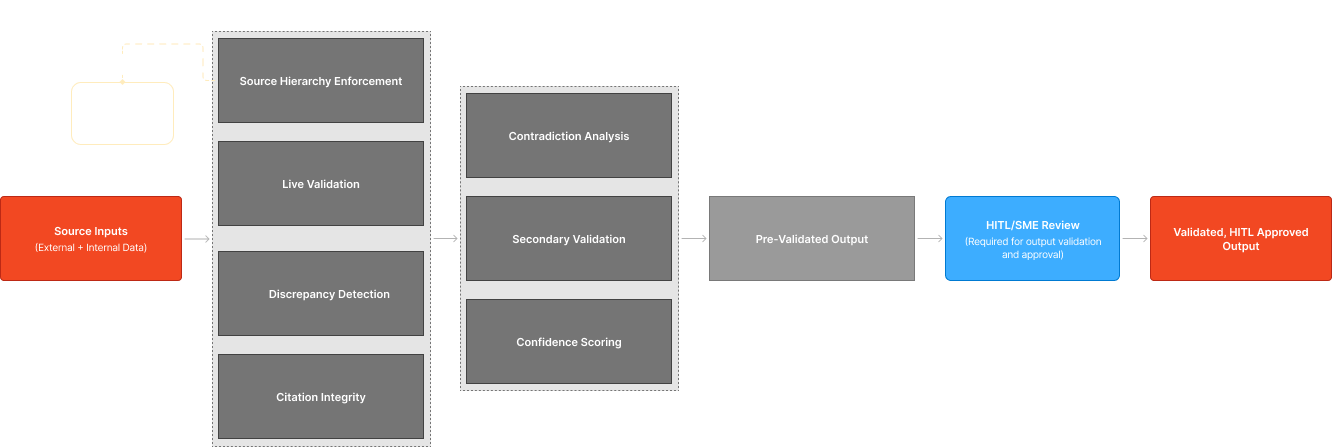

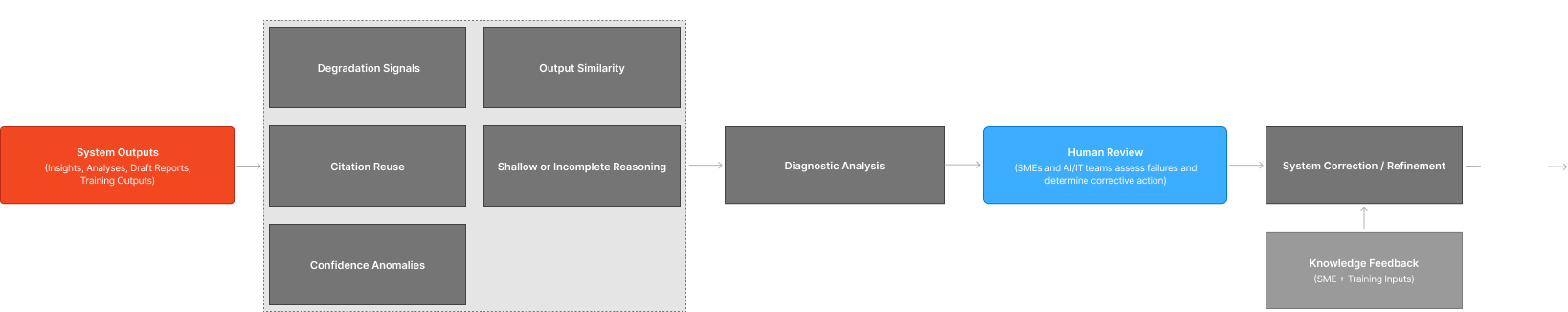

Each diagram in this section represents a core layer of system behavior, from task execution to validation, governance, and risk management.

End-to-end workflow: how AFC tasks move through structured, governed workflows, from intake to validated output.

Select System Components

System Overview: End-to-End Workflow

Verification + Cross Check

Checks regulatory requirements and citations against live sources so that incorrect or unverifiable references are flagged for human review.
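As an illustration of a verification and cross-check step, in line with the stated goal of validating regulatory requirements against live sources and the risk of incorrect or unverifiable citations, the sketch below checks whether each quoted passage actually appears in the text of its cited source. It is a simplified assumption of how such a check could work: the `check_citations` function, the `source_id` and `quote` fields, and the `sources` mapping (standing in for text retrieved from live sources) are hypothetical.

```python
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences do not matter."""
    return re.sub(r"\s+", " ", text).strip().lower()


def check_citations(citations: list, sources: dict) -> list:
    """Label each citation as verified, quote_not_found, or source_not_found.

    citations: list of dicts with hypothetical keys "source_id" and "quote".
    sources:   mapping of source_id to the retrieved source text.
    """
    results = []
    for citation in citations:
        source_text = sources.get(citation["source_id"])
        if source_text is None:
            status = "source_not_found"
        elif normalize(citation["quote"]) in normalize(source_text):
            status = "verified"
        else:
            status = "quote_not_found"
        results.append({**citation, "status": status})
    return results
```

Anything not labeled `verified` would be surfaced to the reviewer instead of being silently dropped, so the check informs human judgment without replacing it.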

HITL Governance Model

Embeds human review as a required system function, ensuring outputs are validated, contextualized, and approved before use.
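Treating human review as a required system function, instead of an optional step, can be expressed as a gate that refuses to release anything a reviewer has not approved. The sketch below is illustrative only: the `Output` class, the `Status` values, and the `release` function are hypothetical names, and the requirement for a reviewer note is an assumption standing in for the "contextualized" step.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    ADVISORY = "advisory"   # default state of every system output
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Output:
    content: str
    status: Status = Status.ADVISORY
    audit_trail: list = field(default_factory=list)

    def review(self, reviewer: str, approved: bool, context_note: str) -> None:
        """Record a human decision together with the reviewer's context."""
        if not context_note.strip():
            raise ValueError("A reviewer note is required")
        self.status = Status.APPROVED if approved else Status.REJECTED
        # Every decision is appended, never overwritten, so it stays traceable.
        self.audit_trail.append({
            "reviewer": reviewer,
            "approved": approved,
            "note": context_note,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })


def release(output: Output) -> str:
    """Return content for downstream use only after human approval."""
    if output.status is not Status.APPROVED:
        raise PermissionError("Output is advisory until a human approves it")
    return output.content
```

Because `release` is the only path to downstream use in this sketch, skipping review is an error, not a shortcut, and the audit trail records who approved what and why.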

Risk Degradation + Monitoring

Detects system drift, performance degradation, and emerging failure patterns.

Continuous testing and diagnostics validate reliability over time, triggering intervention before risk escalates.
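One way to picture monitoring that triggers intervention before risk escalates is a rolling window over recent review outcomes, with more than one signal tracked at a time. This is a minimal sketch under assumptions: the two signals (reviewer override rate and citation failure rate), the window size, and the thresholds are hypothetical and are not taken from the system described here.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewOutcome:
    overridden: bool       # reviewer rejected or substantially rewrote the output
    citation_failed: bool  # at least one citation could not be verified


class DriftMonitor:
    def __init__(self, window: int = 50,
                 max_override_rate: float = 0.2,
                 max_citation_failure_rate: float = 0.05):
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)
        self.max_override_rate = max_override_rate
        self.max_citation_failure_rate = max_citation_failure_rate

    def record(self, outcome: ReviewOutcome) -> None:
        self.outcomes.append(outcome)

    def rates(self) -> dict:
        """Compute each signal's rate over the current window."""
        n = len(self.outcomes)
        if n == 0:
            return {"override": 0.0, "citation_failure": 0.0}
        return {
            "override": sum(o.overridden for o in self.outcomes) / n,
            "citation_failure": sum(o.citation_failed for o in self.outcomes) / n,
        }

    def breached(self) -> list:
        """Return the names of signals that exceed their thresholds."""
        current = self.rates()
        alerts = []
        if current["override"] > self.max_override_rate:
            alerts.append("override")
        if current["citation_failure"] > self.max_citation_failure_rate:
            alerts.append("citation_failure")
        return alerts
```

Tracking signals separately means a rise in unverifiable citations can trigger intervention even while reviewers are still approving most outputs.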

Outcome

This system demonstrates how AI can be deployed in regulated AFC environments to:

Improve efficiency without sacrificing accuracy.

Maintain compliance and audit readiness.

Reduce operational and systemic risk.

Preserve institutional knowledge.

Support long-term resilience.

The impact is structural:

improving how decisions are made, validated, and sustained over time.

What This Approach Demonstrates

The most effective systems are not those that move fastest, but those that operate reliably under constraint.

This work demonstrates that:

AI is a system design problem, not a feature.

Human oversight is a structural requirement.

Reliability requires continuous testing and diagnostics.

Multiple signals are required to evaluate system performance.

User feedback is a critical system input.

System design is a risk-reduction function.